The team

Lars Løberg Monstad

Chief Executive Officer & Co-Founder

Lars is a highly skilled Machine Learning Engineer and Full-Stack Developer specializing in music information retrieval. He has published several articles in the field and developed advanced AI models for music analysis and transcription. As CEO and Co-Founder, he drives the company’s vision and product strategy, combining deep technical expertise with entrepreneurial leadership.

Dr. Olivier Lartillot

Chief Science Officer & Co-Founder

Olivier is a leading researcher at the University of Oslo’s (UiO) RITMO Centre for Interdisciplinary Studies in Rhythm, Time and Motion and a professor at the MishMash Centre for AI and Creativity. He pioneered the hybrid approach, combining transformer models with rule-based systems, that forms the core of our transcription technology, and has over 20 years of experience in computational musicology and music information retrieval (MIR).

Karstein Grønnesby

Chief Operating Officer

Karstein holds a Master’s in Music Technology from the University of Oslo and brings years of experience coordinating projects and productions in the music sector. He oversees project management and leads the B2B side of our product, drawing on his background from Samspill International Music Network and Norsk Viseforum. He is also the founder of Blåsemaker, a workshop dedicated to crafting traditional Norwegian wind instruments.

Our Mission

We believe that music transcription should be accessible to everyone, from students learning their favorite songs to professional musicians preserving their compositions. Our AI technology bridges the gap between audio and notation, making sheet music creation as simple as recording a performance.

Supported by the Norwegian Research Council

Our Research

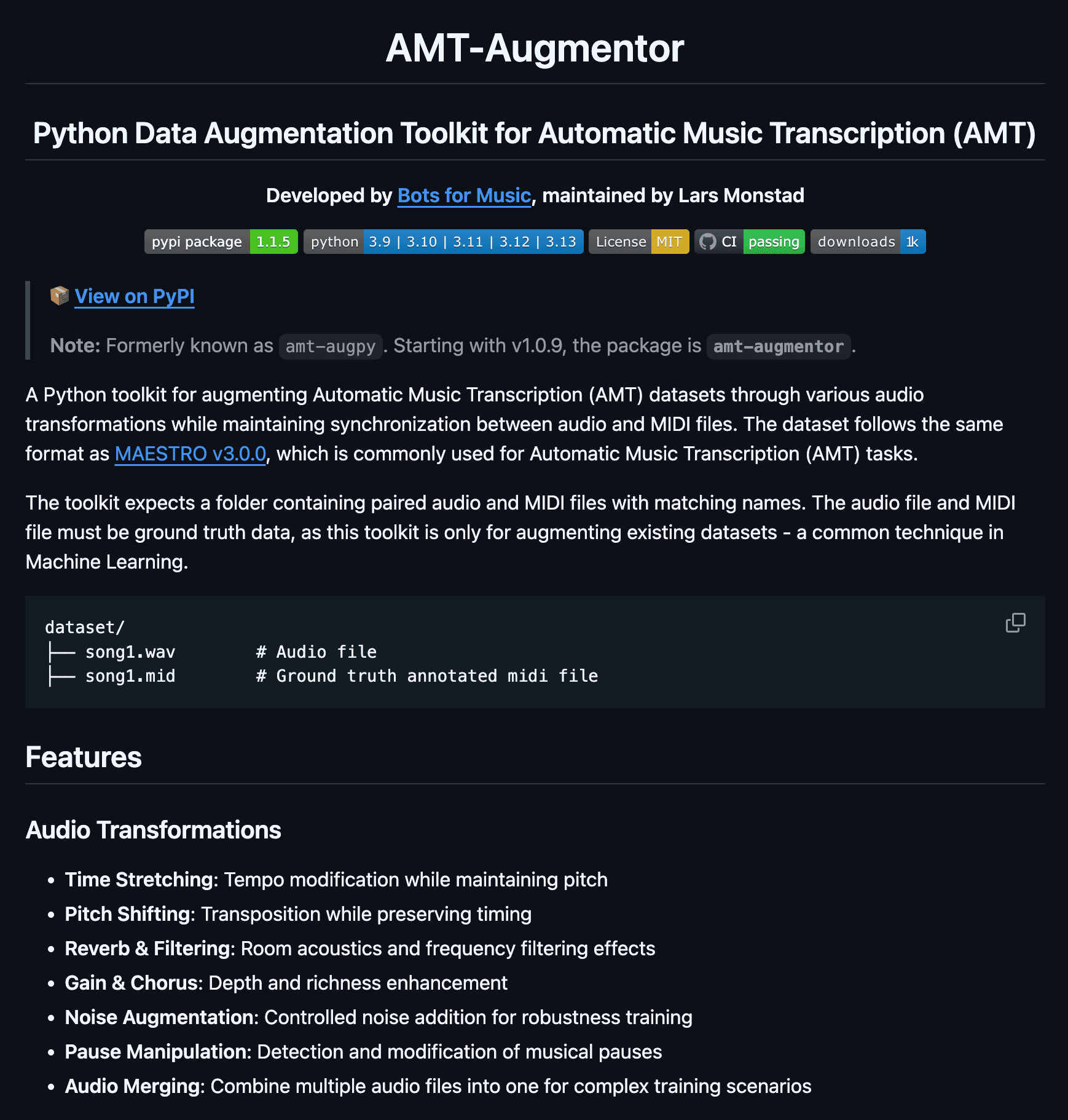

AMT-Augmentor

AMT-Augmentor is a Python toolkit for expanding Automatic Music Transcription (AMT) datasets with high-quality audio transformations (time-stretching, pitch-shifting, reverb and filtering, noise, gain and chorus) while preserving audio–MIDI alignment. It is compatible with the MAESTRO dataset format, runs from the command line, and is configured via YAML. Parallel processing and built-in dataset validation and splitting speed up the training of robust AMT models. It is open source under the MIT License.
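Preserving audio–MIDI alignment means every transformation applied to the audio must be mirrored in the MIDI labels. The sketch below illustrates that idea in plain Python; it is not AMT-Augmentor's API, and the `Note` type and the `stretch_notes` and `shift_notes` functions are hypothetical names chosen for illustration. It assumes time-stretching uses the common convention where a rate above 1 speeds the audio up, so note timestamps are divided by the rate, while pitch-shifting by n semitones transposes MIDI note numbers by n. Effects such as reverb, noise or gain do not move notes in time or pitch, so the labels stay unchanged for those.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Note:
    onset: float   # seconds
    offset: float  # seconds
    pitch: int     # MIDI note number, 0-127


def stretch_notes(notes: List[Note], rate: float) -> List[Note]:
    """Rescale note timings to match audio time-stretched by `rate`.

    Hypothetical helper. Assumes rate > 1 speeds the audio up (shorter
    duration), so every onset and offset is divided by `rate`.
    """
    if rate <= 0:
        raise ValueError("rate must be positive")
    return [replace(n, onset=n.onset / rate, offset=n.offset / rate) for n in notes]


def shift_notes(notes: List[Note], semitones: int) -> List[Note]:
    """Transpose note pitches to match audio pitch-shifted by `semitones`.

    Hypothetical helper. Notes pushed outside the MIDI range 0-127 are dropped.
    """
    shifted = [replace(n, pitch=n.pitch + semitones) for n in notes]
    return [n for n in shifted if 0 <= n.pitch <= 127]
```

For example, slowing a recording to half speed (rate 0.5) doubles every timestamp, so a note starting at 1.0 s moves to 2.0 s, while shifting up three semitones turns MIDI note 62 into 65.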

View on GitHub

HF2: Hardanger Fiddle Dataset

An open paired audio–MIDI dataset of Norwegian Hardanger fiddle recordings, built at the University of Oslo for training and evaluating audio-to-MIDI transcription models. It extends the original HF1 dataset to 119 audio–MIDI pairs across 39 unique songs (90,325 annotated notes, about 970 MB) and introduces emotional variants, in which songs are performed in five interpretations (original, angry, happy, sad, tender). Ground truth is provided as CSV files with high-precision pitch data. It is intended as a research resource for the music information retrieval community.

View on GitHub

Recent publications

See our research model in action

Live transcription of Hardanger fiddle music at the MishMash Centre for AI and Creativity in Oslo · 08.04

In the press

Shifter · Norwegian tech news